We could also access the p-value for each of the regression coefficients at once (view p-value for all variables). The output shows one p-value per regression coefficient in the model, with the intercept first: 0.002175313, 0.022315418, 0.208600183 and 0.178471275.

You can use similar syntax to access any of the values in the regression output.
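As a minimal sketch of pulling every p-value out of a fitted model: the data frame used in the text is not shown, so this example substitutes R's built-in `mtcars` dataset and a hypothetical formula. Only the indexing pattern on `summary(model)$coefficients` is the point here; the numbers it prints will differ from those quoted above.

```r
# Stand-in data (assumption): built-in mtcars, not the data frame from the text
model <- lm(mpg ~ wt + hp + qsec, data = mtcars)

# The coefficient table has one row per term and the columns
# Estimate, Std. Error, t value and Pr(>|t|)
coef_table <- summary(model)$coefficients

# view p-value for all variables: take the whole Pr(>|t|) column
p_values <- coef_table[, "Pr(>|t|)"]
print(p_values)
```

The result is a named numeric vector, so a single entry can be read with `p_values["wt"]`.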

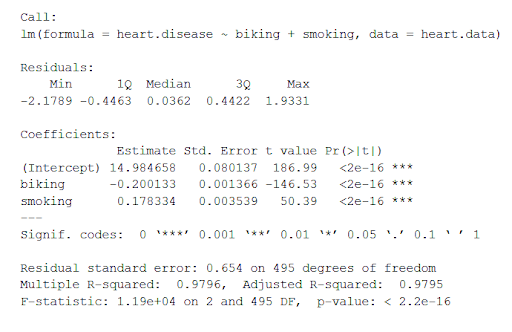

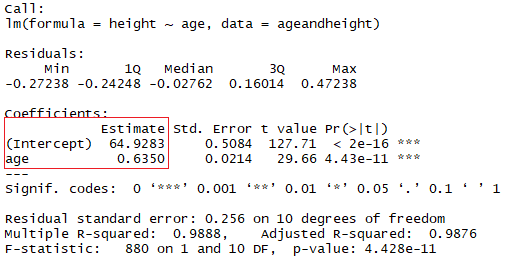

This tutorial illustrates how to return the regression coefficients of a linear model. You can use the following methods to extract regression coefficients from the lm() function in R:

- Method 1: Extract Regression Coefficients Only, with `model$coefficients`
- Method 2: Extract Regression Coefficients with Standard Error, T-Statistic, & P-values, with `summary(model)$coefficients`

The following example shows how to use these methods in practice.

Example: Extract Regression Coefficients from lm() in R

Suppose we create a data frame and fit a multiple linear regression model to it in R. To view the regression coefficients only, we can use `model$coefficients`. We can then use these coefficients to write the fitted regression equation.

To view the regression coefficients along with their standard errors, t-statistics, and p-values, we can use `summary(model)$coefficients`. In the summary output for this model, the intercept row reads `(Intercept) 66.4355 6.6932 9.926 0.00218 **`, that is, an estimate of 66.4355, a standard error of 6.6932, a t-statistic of 9.926 and a p-value of 0.00218. The output also reports `Residual standard error: 3.193 on 3 degrees of freedom`.

We can also access specific values in this output. For example, we can access the p-value for the points variable on its own.

In this video, we:

- use R's built-in 'summary' function to output a summary table of a fitted regression model
- walk through each part of that table

Coefficients from other model types are read differently. The probit regression coefficients give the change in the z-score or probit index for a one unit change in the predictor. For a one unit increase in gre, the z-score increases by 0.001. For each one unit increase in gpa, the z-score increases by 0.478. The indicator variables for rank have a slightly different interpretation.
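The two extraction methods and the single-value lookup can be sketched as follows. The data frame from the example is not reproduced in the text, so this sketch assumes the built-in `mtcars` dataset as a stand-in, with `wt` playing the role of the points variable; the variable names and the printed numbers are illustrative only.

```r
# Stand-in data (assumption): built-in mtcars instead of the data frame in the text
model <- lm(mpg ~ wt + hp, data = mtcars)

# Method 1: view only regression coefficients of model
coefs <- model$coefficients
print(coefs)

# Method 2: view regression coefficients with standard errors,
# t-statistics, and p-values
coef_table <- summary(model)$coefficients
print(coef_table)

# Access one specific value: the p-value for a single variable
# (row name = variable, column name = statistic)
p_wt <- coef_table["wt", "Pr(>|t|)"]
print(p_wt)

# Write out the fitted regression equation from the coefficients
equation <- sprintf(
  "mpg = %.3f + (%.3f)*wt + (%.3f)*hp",
  coefs[["(Intercept)"]], coefs[["wt"]], coefs[["hp"]]
)
cat(equation, "\n")
```

Indexing by row and column name rather than by position keeps the lookup correct if predictors are added to or removed from the formula.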

You can extract regression coefficients from the lm() function in R using `model$coefficients` or `summary(model)$coefficients`.

We can also create a quick function that pulls the data out of a linear regression and returns the important values (R-squared, slope, intercept and p-value) at the top of a nice ggplot graph with the regression line. For the iris data, the plot is built from `ggplot(iris, aes(x = Petal.Width, y = Sepal.Length)) +`, followed by further layers.
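A minimal sketch of such a function is shown below. The original function body is not preserved in the text, so the names `regression_label` and `plot_regression`, the title layout and the rounding are all assumptions made for illustration. It requires the ggplot2 package and expects a simple regression with one predictor.

```r
library(ggplot2)

# Hypothetical helper: build the title text from a fitted simple regression
regression_label <- function(fit) {
  s <- summary(fit)
  paste0(
    "Adj R2 = ", signif(s$adj.r.squared, 3),
    "; Intercept = ", signif(coef(fit)[[1]], 3),
    "; Slope = ", signif(coef(fit)[[2]], 3),
    "; P = ", signif(s$coefficients[2, "Pr(>|t|)"], 3)
  )
}

# Hypothetical helper: scatter plot with regression line and summary title
plot_regression <- function(fit) {
  model_data <- fit$model           # model frame: response first, predictor second
  y_name <- names(model_data)[1]
  x_name <- names(model_data)[2]
  ggplot(model_data, aes(x = .data[[x_name]], y = .data[[y_name]])) +
    geom_point() +
    stat_smooth(method = "lm", formula = y ~ x, colour = "red") +
    labs(title = regression_label(fit), x = x_name, y = y_name)
}

fit <- lm(Sepal.Length ~ Petal.Width, data = iris)
p <- plot_regression(fit)
print(p)
```

Because the function reads the variable names from `fit$model`, the same call works for any simple `lm()` fit, which is convenient when running many tests at once.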

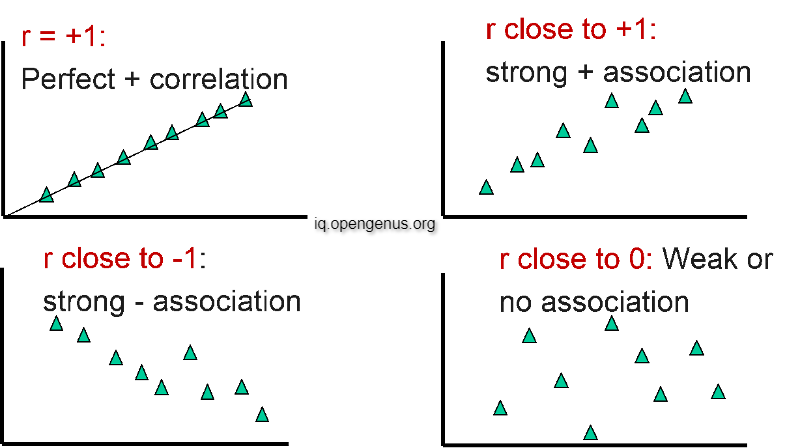

Here is a quick and dirty solution with ggplot2 for plotting the data behind a simple linear regression. Let's try it out using the iris dataset in R, loaded with `data(iris)`. Its columns are Sepal.Length, Sepal.Width, Petal.Length, Petal.Width and Species.

Create `fit1`, a linear regression of Sepal.Length and Petal.Width, and view its summary. The coefficient table ends with the `Pr(>|t|)` column, followed by the significance codes `Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1`. The last lines of the summary include:

- `Residual standard error: 0.478 on 148 degrees of freedom`
- `Multiple R-squared: 0.669, Adjusted R-squared: 0.667`

Summary:

- Residual Standard Error: essentially the standard deviation of the residuals (errors) of your regression model.
- Multiple R-Squared: percent of the variance. The coefficient of determination R-squared, defined as the ratio of the variance of the model to the variance of the response, is an indicator of goodness of fit.

Regression analysis, like most multivariate statistics, allows you to infer that there is a relationship between two or more variables.

Normally we would quickly plot the data in R base graphics with `plot(Sepal.Length ~ Petal.Width, data = iris)`. This can also be plotted in ggplot2 using `stat_smooth(method = "lm")`.
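The steps above can be sketched in base R as follows. The `lm()` call is reconstructed from the description of `fit1` and the `plot()` formula in the text; the `abline()` call that overlays the fitted line is an addition not confirmed by the source.

```r
# Load the built-in iris dataset and look at its columns
data(iris)
print(head(iris))

# Create fit1, a linear regression of Sepal.Length on Petal.Width
fit1 <- lm(Sepal.Length ~ Petal.Width, data = iris)
fit1_summary <- summary(fit1)
print(fit1_summary)

# Individual summary values can be read directly from the summary object
print(fit1_summary$r.squared)      # Multiple R-squared
print(fit1_summary$adj.r.squared)  # Adjusted R-squared
print(fit1_summary$sigma)          # Residual standard error

# Quick base graphics plot of the data
plot(Sepal.Length ~ Petal.Width, data = iris)
abline(fit1)  # assumption: overlay the fitted regression line
```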

Sometimes it's nice to quickly visualise the data that went into a simple linear regression, especially when you are performing lots of tests at once.
